Imagine walking into a crime scene where the most important clues disappear the moment you turn off the lights. That is exactly how volatile data behaves inside a computer system. The moment power is cut, much of this information vanishes with it.

In digital investigations, timing often determines whether evidence survives or disappears forever. Many investigators focus on files stored on disks, but some of the most valuable clues never touch the hard drive. They live briefly inside system memory, active sessions, and running processes. That short-lived information is known as volatile data.

Understanding how this data works can completely change the way an investigation begins. Instead of shutting down a machine immediately, investigators often pause and ask one simple question. What valuable information might disappear if the system powers off?

What Volatile Data Actually Means

In digital forensics, volatile data refers to information that exists temporarily while a system is powered on. Once the device shuts down or restarts, that information usually disappears.

Think of it like writing notes on a whiteboard during a meeting. While the meeting is happening, the board holds useful context. The moment someone wipes it clean, those notes are gone unless someone captured them earlier.

A computer behaves in a similar way. While it runs, the operating system constantly stores information in temporary memory locations. These areas hold active instructions, user sessions, encryption keys, and network activity. They allow programs to run smoothly and quickly.

Unlike files stored on a hard drive, this information was never meant to stay permanently. It exists only while the system is alive. That temporary nature is exactly why volatile data can hold extremely valuable clues during security investigations.

Where Volatile Data Exists in a Computer System

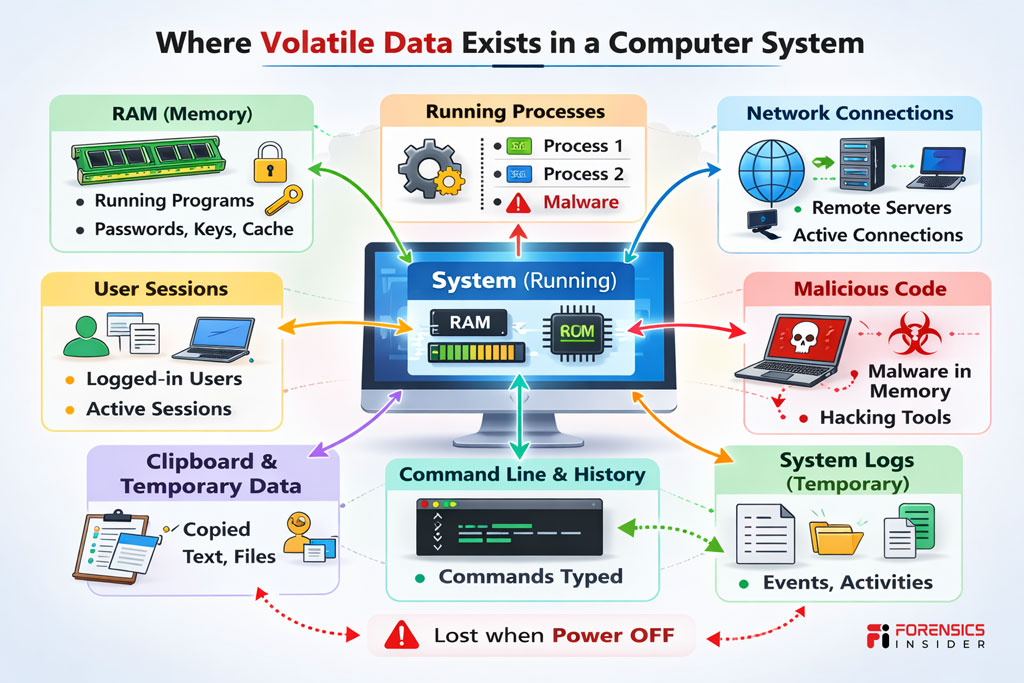

Most volatile information lives inside areas that constantly change while the system is running. The best-known location is system memory, often called RAM.

RAM acts like the computer’s short-term workspace. Programs load into memory so they can run faster than they would directly from disk. While they operate, they leave behind traces of commands, passwords, and activity that may never be saved anywhere else.

Other sources of volatile data appear throughout the operating environment. Running processes show which applications are currently active. Network connection tables reveal which remote systems are communicating with the machine. Logged-in user sessions can show who accessed the system and when.

Even small details like clipboard contents or command history may exist only temporarily. These tiny pieces often seem insignificant, yet together they can paint a surprisingly clear picture of what happened on a system.

Why Volatile Data Is Critical During Investigations

Here’s where things become interesting for forensic investigators.

Many modern attacks do not rely solely on files stored on disk. Instead, attackers execute malicious code directly in memory, an approach often described as fileless. This technique leaves fewer permanent traces and allows them to disappear quickly.

When investigators analyze volatile data, they often uncover hidden processes, active malware, or suspicious network sessions that would otherwise remain invisible. Those clues help explain how an intrusion began and what the attacker attempted to do.

Shutting down a compromised system too early can erase that evidence instantly. Once the machine powers off, the information stored in memory disappears. That lost context can make it far harder to reconstruct the attack timeline.

This is why experienced investigators treat a live system carefully. Before pulling the plug, they often capture memory and other temporary information so those clues remain available for later analysis.

Common Types of Volatile Evidence Investigators Look For

During a live response, investigators focus on several forms of volatile data that often reveal suspicious activity.

Running processes are usually the first place analysts look. These processes show which programs are currently active on the system. Hidden or unfamiliar processes may indicate malware or unauthorized tools.

Network connections provide another valuable layer of insight. Active sessions can reveal whether a machine is communicating with external servers, command infrastructure, or other compromised devices.

Encryption keys sometimes appear in memory while software is running. These keys can help investigators decrypt protected files during an investigation.

Other useful clues include logged-in users, open files, and command history. Even clipboard data can reveal copied passwords or commands that someone executed recently.

Each of these pieces might seem small by itself. Together they often reveal the story of what happened inside the system.
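As a rough illustration of how these pieces combine, the sketch below cross-references a process snapshot with a connection table and flags unfamiliar processes that talk to external addresses. The record fields, the `known_names` allowlist, the internal address ranges, and the sample values are all hypothetical; a real live-response tool would supply its own formats.

```python
import ipaddress
from dataclasses import dataclass

# Assumed internal ranges (RFC 1918); adjust for the network under investigation.
INTERNAL_NETS = [
    ipaddress.ip_network(n)
    for n in ("10.0.0.0/8", "172.16.0.0/12", "192.168.0.0/16")
]


@dataclass(frozen=True)
class Process:
    pid: int
    name: str


@dataclass(frozen=True)
class Connection:
    pid: int
    remote_ip: str
    remote_port: int


def is_internal(ip):
    """Return True if the address falls inside one of the assumed internal ranges."""
    addr = ipaddress.ip_address(ip)
    return any(addr in net for net in INTERNAL_NETS)


def flag_suspicious(processes, connections, known_names):
    """Return (process, connection) pairs worth a closer look.

    A pair is flagged when the remote address is external AND the owning
    process is either missing from the process list or not on the allowlist.
    """
    by_pid = {p.pid: p for p in processes}
    findings = []
    for conn in connections:
        proc = by_pid.get(conn.pid)  # None if the PID is absent from the snapshot
        unfamiliar = proc is None or proc.name not in known_names
        if unfamiliar and not is_internal(conn.remote_ip):
            findings.append((proc, conn))
    return findings
```

A connection whose owning process does not appear in the process list is flagged too, since that mismatch can itself point to a hidden process. A flag here is only a prompt for closer inspection, not proof of compromise.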

Challenges in Collecting Volatile Data

Capturing volatile data is not as simple as copying files from a hard drive. Investigators face a few important challenges.

The biggest challenge is time. Because the information exists only while the system runs, investigators must act quickly. A restart, crash, or shutdown can wipe out critical evidence.

Another challenge involves maintaining evidence integrity. Every action performed on a live system changes its state slightly. Investigators must use careful techniques to avoid contaminating the evidence they are trying to preserve.
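One common way to show that a captured artifact has not changed after collection is to record a cryptographic hash at acquisition time and recompute it later. The following is a minimal sketch using Python's standard `hashlib`; the helper names are illustrative, not part of any forensic tool.

```python
import hashlib


def sha256_of_file(path, chunk_size=1024 * 1024):
    """Hash a file in chunks so large memory images do not need to fit in RAM."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_unchanged(path, recorded_hash):
    """Return True if the file still matches the hash recorded at acquisition."""
    return sha256_of_file(path) == recorded_hash
```

The hash only demonstrates that the captured file stayed the same after it was written. It says nothing about changes the collection process itself made to the live system.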

System stability can also become a concern. Some compromised machines behave unpredictably, especially when malicious software is still active.

For these reasons, trained analysts follow strict procedures when collecting volatile evidence.

Tools and Techniques Used to Capture Volatile Data

Digital investigators rely on specialized tools to collect volatile data safely. These tools capture snapshots of system memory and other live system information.

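Memory acquisition tools copy the contents of RAM to a file while the system continues running. Because memory keeps changing during the capture, the resulting image is a close approximation of the system's state rather than a perfectly consistent snapshot. Analysts later examine this copy in forensic software to identify suspicious artifacts.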
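One of the simplest first passes over a captured memory image is to pull out runs of printable text, which can surface commands, URLs, or credentials. The sketch below is an assumption-laden illustration of that idea, not a substitute for dedicated memory analysis software. It handles ASCII only, so text stored in other encodings such as UTF-16 would be missed.

```python
import re


def extract_strings(data: bytes, min_length: int = 6):
    """Return runs of printable ASCII characters at least min_length long."""
    # \x20-\x7e covers space through tilde, the printable ASCII range.
    pattern = re.compile(rb"[\x20-\x7e]{%d,}" % min_length)
    return [match.group().decode("ascii") for match in pattern.finditer(data)]
```

For example, calling `extract_strings` on the bytes of an image file that was read in binary mode returns a list of candidate strings to review. The sample bytes used to exercise it would be invented for illustration, not taken from real evidence.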

Live response tools gather information about processes, network sessions, and system configuration. These snapshots help investigators understand the system’s current state without shutting it down immediately.
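To make the idea concrete, here is a minimal sketch of a live-response collector that runs a few standard operating system commands and stores their output with a timestamp. The command table is an assumption: `netstat` is not installed on every Linux system, and `query user` is not available on every Windows edition. In practice, investigators usually run trusted copies of such tools rather than binaries on the suspect machine, which may have been tampered with.

```python
import platform
import subprocess
from datetime import datetime, timezone

# Illustrative command table; availability varies by system (assumption).
COMMANDS = {
    "Linux": {
        "processes": ["ps", "aux"],
        "connections": ["netstat", "-an"],
        "users": ["who"],
    },
    "Windows": {
        "processes": ["tasklist"],
        "connections": ["netstat", "-ano"],
        "users": ["query", "user"],
    },
}


def run_command(argv):
    """Run one command and return its text output, or a note if it could not run."""
    try:
        result = subprocess.run(argv, capture_output=True, text=True, timeout=30)
        return result.stdout
    except (FileNotFoundError, subprocess.TimeoutExpired) as exc:
        return f"[not collected: {exc}]"


def collect_snapshot(system=None, runner=run_command):
    """Collect the output of each configured command into one dictionary."""
    system = system or platform.system()
    snapshot = {
        "collected_at": datetime.now(timezone.utc).isoformat(),
        "system": system,
    }
    for label, argv in COMMANDS.get(system, {}).items():
        snapshot[label] = runner(argv)
    return snapshot
```

The `runner` parameter exists so the collection logic can be exercised with a stand-in function instead of touching a real system. Every command that does run alters the live system slightly, which is why the set is kept small.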

The goal is simple. Capture as much temporary information as possible while keeping the system stable and preserving the integrity of the evidence.

Best Practices for Preserving Volatile Evidence

Experienced investigators often follow a concept known as the order of volatility. This idea prioritizes collecting the most fragile information first.

Highly volatile data such as CPU registers and memory contents disappear quickly, so analysts capture them early. Less fragile evidence like disk files can be collected later.
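The ordering can be expressed as a simple sort. In this sketch the source names and rank numbers are illustrative assumptions that loosely follow the ordering described above; they are not an official scale.

```python
# Lower rank = more fragile = collect sooner. Values are illustrative only.
VOLATILITY_RANK = {
    "cpu_registers": 0,
    "memory": 1,
    "network_connections": 1,
    "running_processes": 1,
    "disk_files": 2,
}


def plan_collection(sources):
    """Order evidence sources from most to least volatile.

    Unknown sources are placed last. Python's sort is stable, so sources
    with the same rank keep the order in which they were listed.
    """
    last = max(VOLATILITY_RANK.values()) + 1
    return sorted(sources, key=lambda s: VOLATILITY_RANK.get(s, last))
```

Given `["disk_files", "memory", "cpu_registers"]`, the function returns the registers first, then memory, then disk files.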

Documentation also plays a major role. Every action taken during live analysis must be recorded carefully so investigators can explain their process later in court or internal reviews.
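A record like this can be as simple as a timestamped list of entries. The sketch below shows one possible shape for such a log; the field names and the examiner and host values in the usage note are hypothetical, and real procedures are dictated by the organization's own policies.

```python
import json
from datetime import datetime, timezone


def log_action(log, examiner, action, detail=""):
    """Append one timestamped entry describing an action taken on the live system."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),  # UTC avoids time zone ambiguity
        "examiner": examiner,
        "action": action,
        "detail": detail,
    }
    log.append(entry)
    return entry


def export_log(log):
    """Serialize the log as JSON so it can be stored alongside the evidence."""
    return json.dumps(log, indent=2)
```

For instance, `log_action(log, "analyst1", "memory capture started", "server-01")` adds an entry before the capture begins, and `export_log(log)` produces the text to archive once the response is complete.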

Maintaining a clear chain of custody helps ensure that volatile data remains trustworthy evidence throughout the investigation.

A Simple Real-World Scenario

Imagine a company detects unusual traffic leaving one of its servers late at night. Security staff connect to the system remotely to investigate.

At first glance, the server appears normal. No suspicious files exist on the hard drive. Nothing obvious shows up in traditional scans.

But a quick memory capture reveals something interesting. A hidden process is running quietly in the background. The process maintains an active connection to an external command server.

That information existed only in volatile data. If the server had been rebooted immediately, the process and its connection details might have vanished without leaving any trace.

By capturing memory first, investigators gained the evidence they needed to understand the intrusion.

Conclusion

Digital investigations often focus on files stored on disk, but some of the most valuable clues live only in temporary system memory. That is why volatile data plays such an important role in modern forensic analysis.

When investigators encounter a live system, the decisions they make in the first few minutes can determine whether critical evidence survives. Capturing temporary system information preserves clues that might otherwise disappear forever.

Understanding how volatile data works helps investigators approach live systems with patience, strategy, and the right tools. In many cases, those fleeting traces provide the missing pieces that explain what really happened inside a compromised machine.